Stanford CoreNLP Tools: Processing multiple files in a directory

While annotating a single file is sometimes all you want to do, the typical corpus linguistic annotation task is likely to require the annotation of multiple files. So far, we have explored different sets of annotators for annotating a single file. In order to accomplish this, we have used the switch -file followed by the name of a single file to be annotated. The next task is setting up a batch file to run the same annotators on more than one file.

In order to run the Stanford CoreNLP tools on multiple files, we need a list of the files to be annotated. So let us create a list of the files located in a particular directory. The list file should contain one filename with its full directory path per line:

c:\Users\Public\CORPUS-DIRECTORY\corpusfile01.txt

c:\Users\Public\CORPUS-DIRECTORY\corpusfile02.txt

c:\Users\Public\CORPUS-DIRECTORY\corpusfile03.txt

c:\Users\Public\CORPUS-DIRECTORY\corpusfileNN.txt

…

Using DIR or LS to list files

Let us first of all remind ourselves of what we already know:

To list the files in a directory, we use the dir command in the Windows command prompt (cmd.exe), or ls in the shell of UNIX-like operating systems (Linux, Mac OS) or in Windows PowerShell. However, the output of a plain dir or ls command is not quite what we need, namely a list of files with their full paths and nothing else.

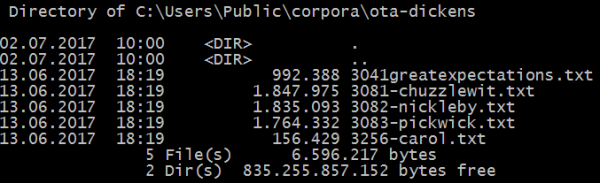

Windows command output generated by running the command dir:

This is not quite what we need as an input file for the CoreNLP tools. So let us find out how we can get the output we need for the CoreNLP tools. With dir and ls, we are on the right track, but need to modify those commands in order to get the correct output format. Fortunately, the commands can take parameters specifying the output format of the commands.

Commands according to shell types and operating systems

Microsoft Windows: Windows command prompt (cmd.exe; "Eingabeaufforderung" in German-language Windows)

dir /B /S *.txt > filelist.lst

writes the desired output of all text files with their full paths, one entry per line to the file filelist.lst which can serve as the input file for the CoreNLP tools.

dir calls the function listing the contents of a directory

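/B uses the bare format, printing only the names without heading information or summary

/S includes the files in the specified directory and all of its subdirectories; combined with /B, each file is listed with its full path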

*.txt lists only files with the extension .txt

> redirects the output to a file instead of standard output (terminal)

filelist.lst is the target file to which the output of the command is written

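Windows PowerShell

(Get-ChildItem C:\CORPUS-DIRECTORY\ -Recurse -File -Filter *.txt).FullName > filelist.lst

or shorter:

(gci -r -File -Filter *.txt C:\CORPUS-DIRECTORY\).FullName > filelist.lst

-File restricts the listing to files, so that no directory names end up in the list, and -Filter *.txt keeps only files with the extension .txt.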

If you are already in the directory with your corpus files:

(gci -r ./*.txt).fullname > filelist.lst

Unix-style OSes: Linux and Mac OS

On Unix-style operating systems such as Linux or Mac OS:

ls -d -1 "$PWD"/*.txt > filelist.lst
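The ls call above only covers files directly in the current directory. As a rough sketch of a recursive alternative, comparable to dir /B /S on Windows, the find command can be wrapped in a small shell function. The function name make_filelist and its two arguments are made up for this example; they are not part of CoreNLP.

```shell
# make_filelist CORPUS_DIR FILELIST
# Writes the absolute path of every .txt file below CORPUS_DIR to FILELIST,
# one path per line. (Hypothetical helper, not part of CoreNLP.)
make_filelist() {
  # Resolve the corpus directory to an absolute path so that find
  # prints absolute paths as well.
  corpus_abs="$(cd "$1" && pwd)" || return 1

  # -type f keeps regular files only; -name '*.txt' keeps text files.
  # sort gives the list a stable, reproducible order.
  find "$corpus_abs" -type f -name '*.txt' | sort > "$2"
}
```

Called as make_filelist "$HOME/CORPUS-DIRECTORY" filelist.lst, this would write the list to filelist.lst in the current directory; the corpus path here is only a placeholder.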

Using the filelist in CoreNLP

To annotate the files listed in filelist.lst, we have to tell CoreNLP that this file contains our list of annotation target files. We do this by means of the parameter -filelist followed by the name of the file list, including its full path if it is not located in the CoreNLP directory. Note that the parameter -filelist is used here instead of -file, which we used to address just a single file:

:: Stanford Core NLP batch file to be called from the commandline

:: calls the Stanford Core NLP Tools with the input files named in a file list (-filelist) and writes output to a directory of the user's choice (-outputDirectory)

:: note that this whole call must be on one single line; the line breaks in the text below are merely displayed for layout purposes

java -cp "*" -Xmx2g edu.stanford.nlp.pipeline.StanfordCoreNLP -annotators tokenize,ssplit,pos,lemma,ner,parse,dcoref -filelist "D:\CORPUS-DIRECTORY\filelist.lst" -outputFormat xml -outputDirectory "D:\CORPUS-DIRECTORY\core-nlp-output"

The last parameter used here is called -outputDirectory and allows you to tell the CoreNLP tools where to write the annotated output files. It needs to be followed by the path to an existing output directory. This directory can sit parallel to the directory with the input files, so that you can use it as the source for any further processing. Keeping annotated files separate from the original corpus files is a very good idea in order to keep some order in your file system.
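For readers working in a Unix-style shell, the same call might be wrapped as follows. This is only a sketch: the function name run_corenlp and the variables JAVA and HEAP are invented for this example, while the java arguments are copied from the batch file above. In a shell script, a trailing backslash continues the command on the next line, so the call does not have to sit on one single line. Because the classpath "*" refers to the jar files in the current directory, the function is meant to be called from within the CoreNLP directory.

```shell
# run_corenlp FILELIST OUTPUT_DIR
# Hypothetical wrapper around the CoreNLP call shown above.
#   JAVA  command used to start Java (set JAVA=echo for a dry run
#         that only prints the arguments)
#   HEAP  value for -Xmx, defaults to 2g as in the batch file
run_corenlp() {
  # Create the output directory if it does not exist yet.
  mkdir -p "$2" || return 1

  "${JAVA:-java}" -cp "*" "-Xmx${HEAP:-2g}" \
    edu.stanford.nlp.pipeline.StanfordCoreNLP \
    -annotators tokenize,ssplit,pos,lemma,ner,parse,dcoref \
    -filelist "$1" \
    -outputFormat xml \
    -outputDirectory "$2"
}
```

A dry run such as JAVA=echo run_corenlp filelist.lst core-nlp-output prints the arguments without starting Java, which can help to spot a wrong path before a long annotation run. HEAP=4g run_corenlp filelist.lst core-nlp-output would start the run with a larger memory limit.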

Please note that if you are processing multiple longer files you may have to increase the amount of allocated memory by increasing the value of the parameter

-Xmx2g

to 4 or even more gigabytes (for example -Xmx4g), depending on how much RAM your machine has. Please be aware that your operating system and any other processes running on your machine also use some RAM, so in practice you cannot allocate all available RAM to CoreNLP. Many processing pipelines, especially for languages other than English, require a lot of memory.
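Before starting a long batch run, it may also be worth checking that every path in the file list points to a readable file. The following is a minimal sketch for a Unix-style shell; check_filelist is a made-up helper name, not a CoreNLP feature.

```shell
# check_filelist FILELIST
# Reports every listed path that is not a readable regular file and
# returns 1 if at least one such path was found. (Hypothetical helper.)
check_filelist() {
  missing=0
  # Read the list line by line; the second test also handles a last
  # line without a trailing newline.
  while IFS= read -r path || [ -n "$path" ]; do
    [ -z "$path" ] && continue            # skip empty lines
    if [ ! -f "$path" ] || [ ! -r "$path" ]; then
      printf 'not readable: %s\n' "$path" >&2
      missing=1
    fi
  done < "$1"
  return "$missing"
}
```

A call such as check_filelist filelist.lst && echo "file list looks fine" prints the confirmation only if all entries could be found.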

Except where otherwise noted, content on this wiki is licensed under the following license: CC Attribution-Share Alike 4.0 International
